This article is a guest post written by Patrick Pellegrino, Senior Cybersecurity Analyst.

Threat hunting and discovery with Siren

Monitoring the threat landscape, that is, gathering telemetry data about threats together with current and emerging trends, is quite challenging, but it is also a much-needed activity in any large organization.

Threat hunting, the discovery and research of threats, goes hand in hand with security monitoring.

A typical workflow can be:

- A specific threat is received, for example a malware sample is found. Threat analysis reveals how the threat operates.

- Security monitoring then reveals if the signs of the identified threat are being observed at a network or endpoint level.

There are multiple categories into which a threat could fall, requiring multiple tools to be placed on the battlefield to assemble the required intelligence. In this scenario, connecting the dots of a malicious behavior and looking at the big picture are key elements in supporting the next defensive actions to understand, track and respond appropriately.

The playground for such analysis does not necessarily have to be the whole internet: a corporate network, with its security products and alerts, fits the use case I am going to present perfectly.

One of the main goals is to create context and awareness about the threats a company faces and to make sense of them.

In the coming scenario, I have used Siren to intuitively uncover relations that would otherwise be hard to notice across:

- OSINT malware reports.

- Social network platform messages.

- Static properties extracted from Windows executable files.

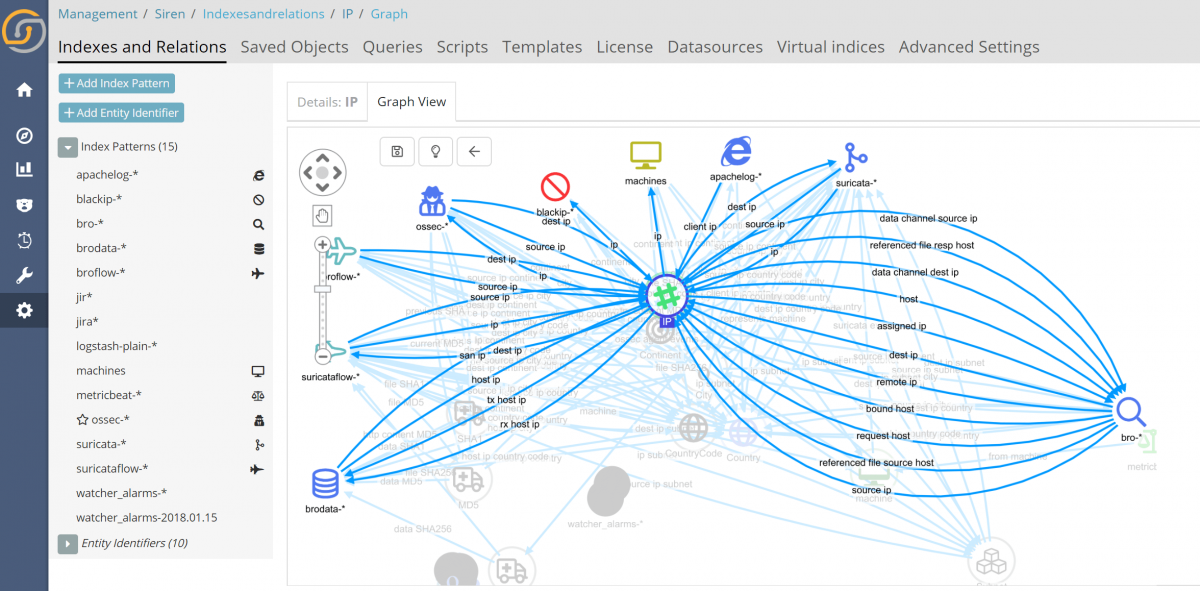

The key, as we will see, is to explain to the system how data is interconnected (how log sources are related to each other) using a defined data model, after which Siren will make data exploration and analysis easy and powerful.

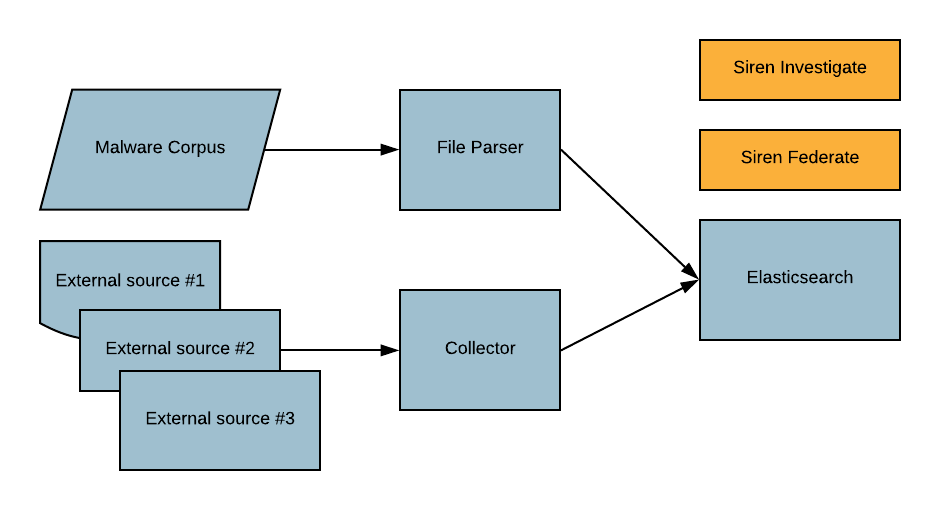

System architecture

It all starts with logs in a Siren enhanced Elasticsearch

Elasticsearch is a very natural storage for security related logs. With its ability to scale, search and drill down, it provides a great basis for the rest that will be shown.

Thanks to the Siren Federate plugin, these great base capabilities are augmented by the possibility of cross-index joins and record-to-record link analysis; at the heart of this, we find the data model.

System components

All the data sources listed above use their own structure to describe the information they deliver. As such, every collected element needs to be reshaped at some point in the data pipeline to fit the new internal data model.

The whole process, from data collection to storage and, afterwards, data linking and visualization, is made up of multiple intermediate components. There are three modules in total, outlined below.

Collector: In charge of retrieving external feeds or raw data from multiple services.

Each feed fetcher also reshapes the incoming information into a newly defined structure before sending the result to Elasticsearch.

It runs:

- Sandbox-feed.

- Social-network-feed.
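The reshaping step performed by each feed fetcher can be sketched as follows. This is only a minimal illustration: the raw field names (`md5`, `domains`, `hosts`, `user`, `text`) and the target structure are assumptions made for the example, not the actual schema used by the platform or by any feed.

```python
import re

# Hypothetical pattern for spotting MD5 hashes mentioned in free text.
MD5_PATTERN = re.compile(r"\b[a-fA-F0-9]{32}\b")


def normalize_sandbox_report(raw: dict) -> dict:
    """Map one raw sandbox feed entry onto a shared internal structure."""
    return {
        "source": "sandbox-feed",
        "md5": raw.get("md5", "").lower(),
        # De-duplicate and sort so that documents are stable across runs.
        "domains": sorted(set(raw.get("domains", []))),
        "ips": sorted(set(raw.get("hosts", []))),
    }


def normalize_tweet(raw: dict) -> dict:
    """Map one raw social network message onto the same kind of structure."""
    text = raw.get("text", "")
    return {
        "source": "social-network-feed",
        "user": raw.get("user", ""),
        "text": text,
        # Hashes found in the text are what later link a tweet to a sample.
        "md5_hashes": [h.lower() for h in MD5_PATTERN.findall(text)],
    }
```

Because both functions emit a lowercase `md5` value, documents from different sources can later be related to each other on that field.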

File parser: Static processor that extracts features from Windows executable files.

It runs:

- File-parser.

Storage: The main component for storing processed data coming from the Collector and File parser modules. It also leverages Siren for data exploration and analysis.

And finally, we have Elasticsearch (with the Siren Federate plugin installed) and Siren Investigate, the frontend.

Use cases

Keeping in mind the platform objectives and its components, a possible workflow chain boils down to this:

- Data exploration (only at the beginning, for newly added sources).

- Data discovery / Searching for interesting events.

- Filtering/Drill-down (on: collected data and external platforms).

- Samples download.

- Quick analysis (study the sample if gathered context is not enough).

- (Optional) Static and dynamic.

- (Optional) Manual interaction.

- Writing a detection rule for the sample and installing the new rule into any supported security system at the company's disposal.

- Creating a Siren Alert watcher to catch new samples.

To introduce the coming use cases, let me first provide some background.

As stated earlier, for the sake of the demo, the malware reports come from a public sandbox system, Falcon Sandbox, but it can be swapped for any equivalent technology.

Every time a new file is dissected by the public service, a set of reports is generated on completion, and a machine-readable version of the same is also made public (the feed).

Keep in mind that, because this is a free service, the amount of information returned by the system for each analysis is limited. Therefore, looking for patterns in samples on top of this data will sometimes yield fewer or no results compared to the sample features offered by the platform.

Yet again, the point is not to reinvent the wheel or recreate a feature already offered by the free service, but to present a way to connect heterogeneous log sources to each other and explore their content.

On the other hand, social network messages from specific Twitter users are also collected. The intent is to get notified about emerging infections or interesting samples observed by the security community.

Last but not least, static properties of Windows executable files are also extracted from a local malware zoo. From the latter, we will be able to track samples with overlapping features and characteristics and, when available, link the same sample back to a tweet or an online sandbox report, giving more context to the file.

With this background in mind, let’s explore some use cases.

Case 1 – GoScanSSH malware

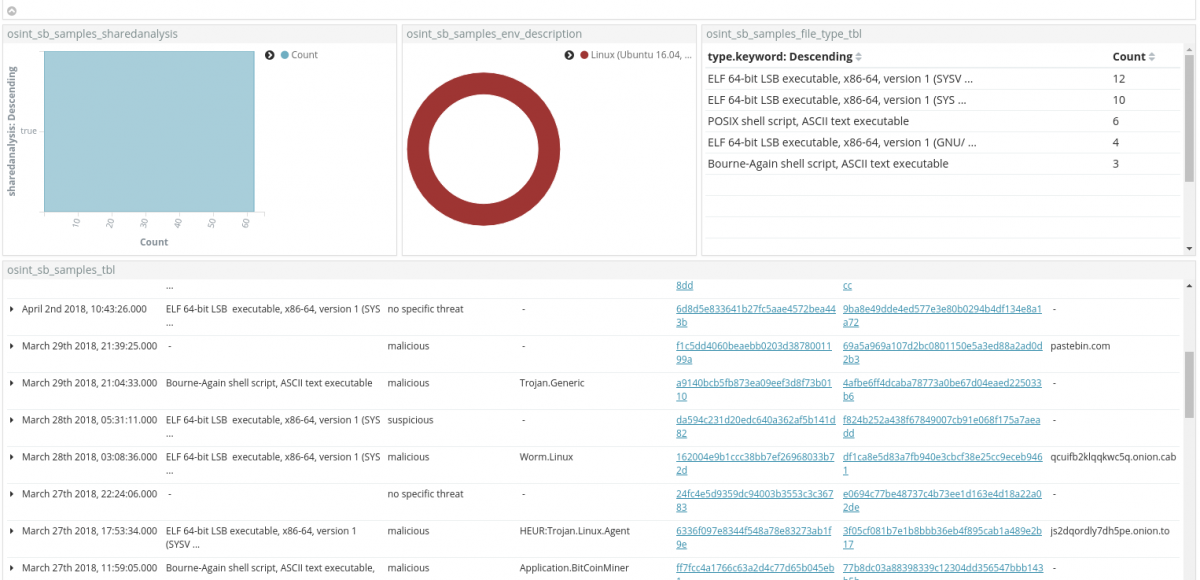

We start data discovery focusing on:

- Linux ELF file.

- Sample showing network traffic to onion domains (i.e., connecting to the Tor network).

Skimming through the results, the first candidate is a sample flagged as Worm.Linux, connecting to qcuifb2klqqkwc5q[.]onion[.]cab.

Clicking the MD5 hash makes it possible to pivot into the online sandbox analysis. This is made possible by the Siren Investigate enhanced search results and its click handler feature, which enables the translation of a URL string into a clickable object.

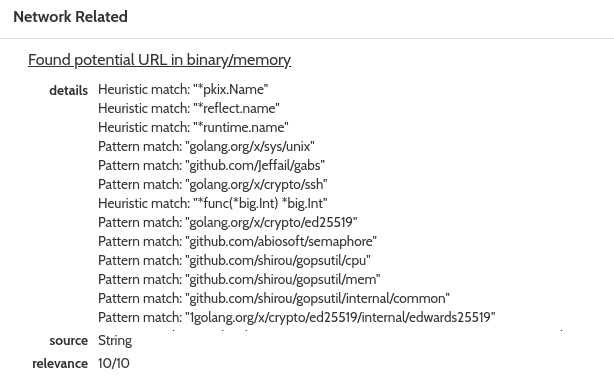

Stepping into the online report and focusing on the Network Related tab, it is also possible to analyze potential URLs observed in the binary/memory sample. Here is a snippet of the content:

At first glance, elements that grab attention are:

- "onion.to/?if-unmodified-sinceillegal"

- "onion.cab/?invalid"

- "onion.link/?in"

- "golang.org/s/cgihttpproxya"

The Golang string is of particular interest, giving a hint about the language, or at least part of the library, that was used to code the malware.

Scrolling the Network Related tab, more strings are also visible, shedding some light on the inner libraries and hard-coded values embedded in the specimen:

...

- Heuristic match: "*runtime.name"

- Pattern match: "golang.org/x/sys/unix"

- Pattern match: "github.com/Jeffail/gabs"

- Pattern match: "golang.org/x/crypto/ssh"

...

- Pattern match: "golang.org/x/crypto/ed25519"

- Pattern match: "github.com/abiosoft/semaphore"

- Pattern match: "github.com/shirou/gopsutil/cpu"

- Pattern match: "github.com/shirou/gopsutil/mem"

...

- Pattern match: "army.html.navy/proc000001111112345156255432178125::/96:path"

- Pattern match: "localhost.police.uk/dev/stdin/etc/hosts/setgroups072752712210.0.0.0/811.0.0.0/811223344551220703125123456789012345qwert1q2w3e4r5t21.0.0.0/822.0.0.0/826.0.0.0/828.0.0.0/829.0.0.0/830.0.0.0/833.0.0.0/855.0.0.0/86103515625"
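When a report contains many such lines, the Go package paths can be pulled out programmatically rather than by eye. The following is a rough sketch that assumes the report text is available as a plain string in the "Pattern match" layout shown above; the regular expression and the function name are illustrative choices, not part of the sandbox service.

```python
import re

# Quoted strings that start with a well-known Go package host.
GO_PACKAGE = re.compile(r'"((?:golang\.org|github\.com)/[\w./-]+)"')


def go_packages(report_text: str) -> list[str]:
    """Return the Go package paths found in the text, in order, without repeats."""
    found: list[str] = []
    for match in GO_PACKAGE.finditer(report_text):
        path = match.group(1)
        if path not in found:
            found.append(path)
    return found


snippet = (
    'Heuristic match: "*runtime.name" '
    'Pattern match: "golang.org/x/sys/unix" '
    'Pattern match: "github.com/Jeffail/gabs" '
    'Pattern match: "golang.org/x/crypto/ssh"'
)
print(go_packages(snippet))
```

A list like this gives a quick impression of what the binary was built to do, for instance SSH handling and host resource inspection in this sample.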

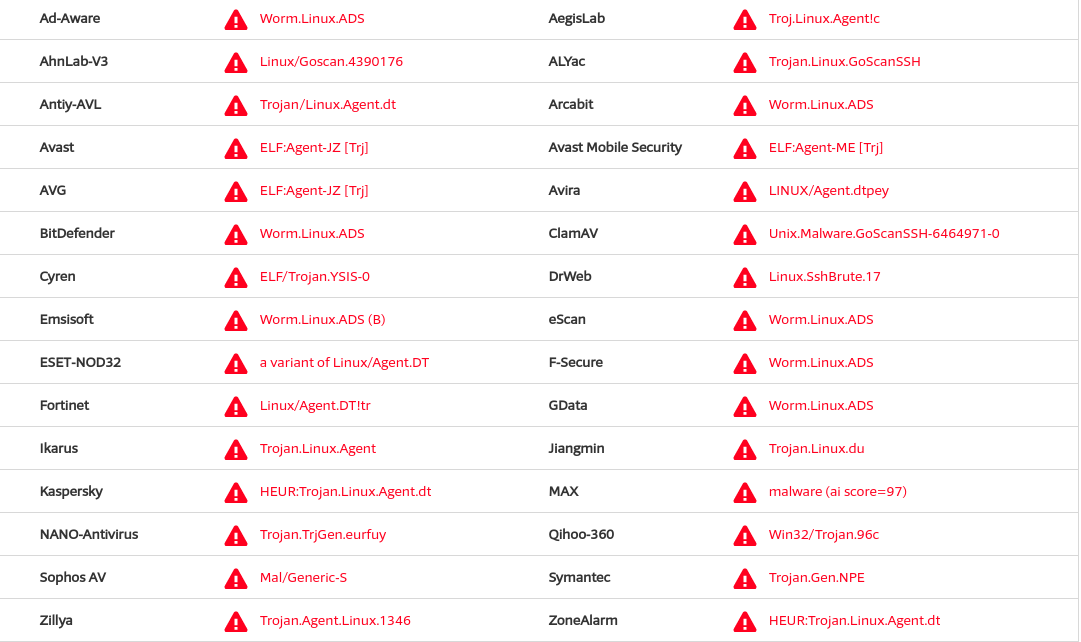

AV lookups

VirusTotal is a mandatory stop for checking AV vendors' signature labels. Looking up 162004e9b1ccc38bb7ef26968033b72d yields:

Interesting signatures that depart from the generic nomenclature are:

- ClamAV: Unix.Malware.GoScanSSH-6464971-0

- ALYac: Trojan.Linux.GoScanSSH

- AhnLab-V3: Linux/Goscan.4390176

Quick web search

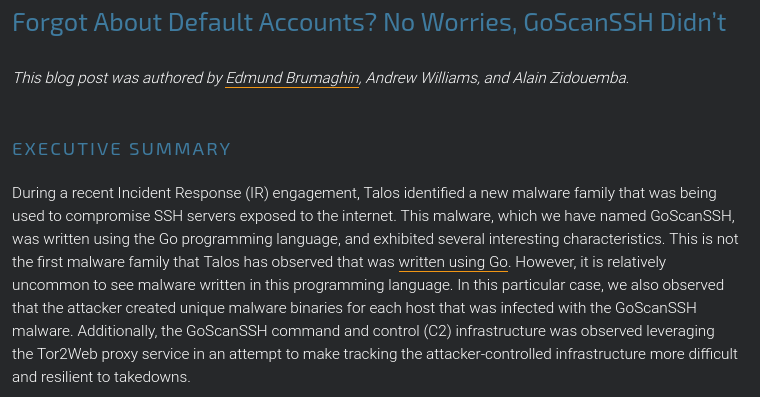

A quick web search with keywords such as golang, goscanssh and linux returns, among other results, a Cisco Talos analysis.

The article gives all the necessary information and drill-down about the malware family and inner workings.

Back to Siren

At this stage, after adding additional context around the sample and confirming its unique and interesting features, we can move back to the Siren system and fire up the graph browser.

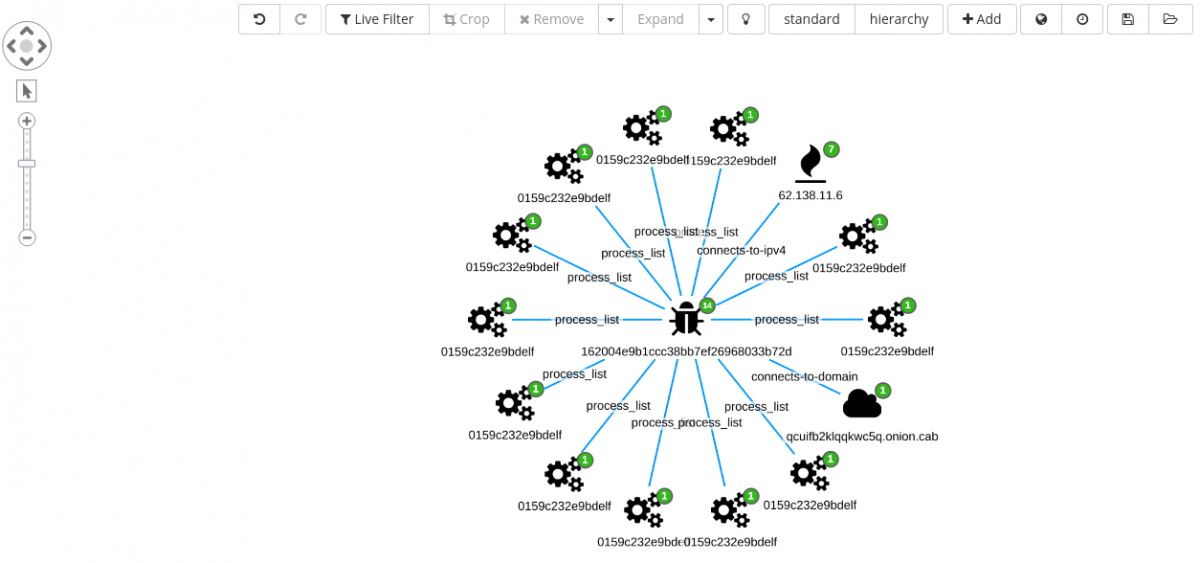

Filtering for the hash 162004e9b1ccc38bb7ef26968033b72d and adding the associated index adds just that single sample to the graph. From here we can expand on connected objects.

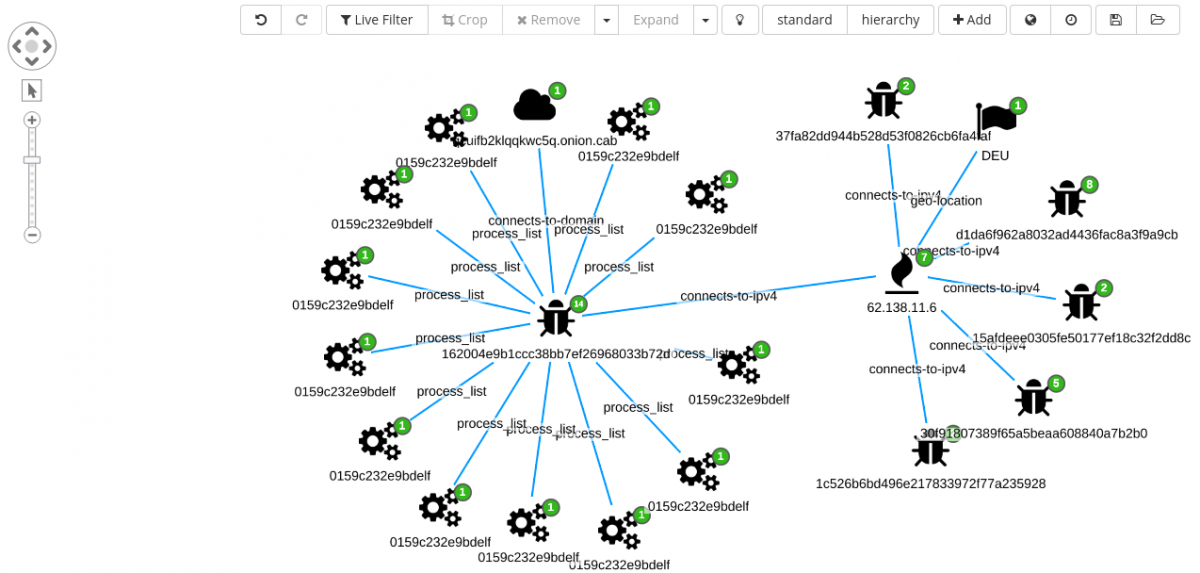

Looking at the new edges, the investigation can move forward by expanding the IP 62.138.11[.]6 node, which brings six additional nodes into the graph.

A total of five new samples are revealed:

- d1da6f962a8032ad4436fac8a3f9a9cb

- 37fa82dd944b528d53f0826cb6fa4faf

- 30f91807389f65a5beaa608840a7b2b0

- 15afdeee0305fe50177ef18c32f2dd8c

- 1c526b6bd496e217833972f77a235928

Each new node can be expanded and analyzed.
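Conceptually, expanding an IP node amounts to asking which other samples contacted the same address. The sketch below reproduces that idea in plain Python over in-memory records; it is not how the Siren graph browser is implemented, and the record layout (`md5`, `ips`) and sample identifiers are placeholders invented for the example.

```python
from collections import defaultdict


def samples_by_ip(records: list[dict]) -> dict[str, set[str]]:
    """Build an inverted index: IP address -> set of sample hashes."""
    index: dict[str, set[str]] = defaultdict(set)
    for record in records:
        for ip in record["ips"]:
            index[ip].add(record["md5"])
    return index


def expand_from_sample(md5: str, records: list[dict]) -> dict[str, set[str]]:
    """For each IP contacted by `md5`, list the other samples sharing it."""
    index = samples_by_ip(records)
    own_ips = next((set(r["ips"]) for r in records if r["md5"] == md5), set())
    related = {}
    for ip in own_ips:
        others = index[ip] - {md5}
        if others:
            related[ip] = others
    return related


# Placeholder data using documentation-only IP ranges.
records = [
    {"md5": "sample-a", "ips": ["198.51.100.7", "203.0.113.9"]},
    {"md5": "sample-b", "ips": ["198.51.100.7"]},
    {"md5": "sample-c", "ips": ["192.0.2.44"]},
]
print(expand_from_sample("sample-a", records))
```

Only the shared address is returned, which mirrors how an expansion surfaces new samples through a common infrastructure node.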

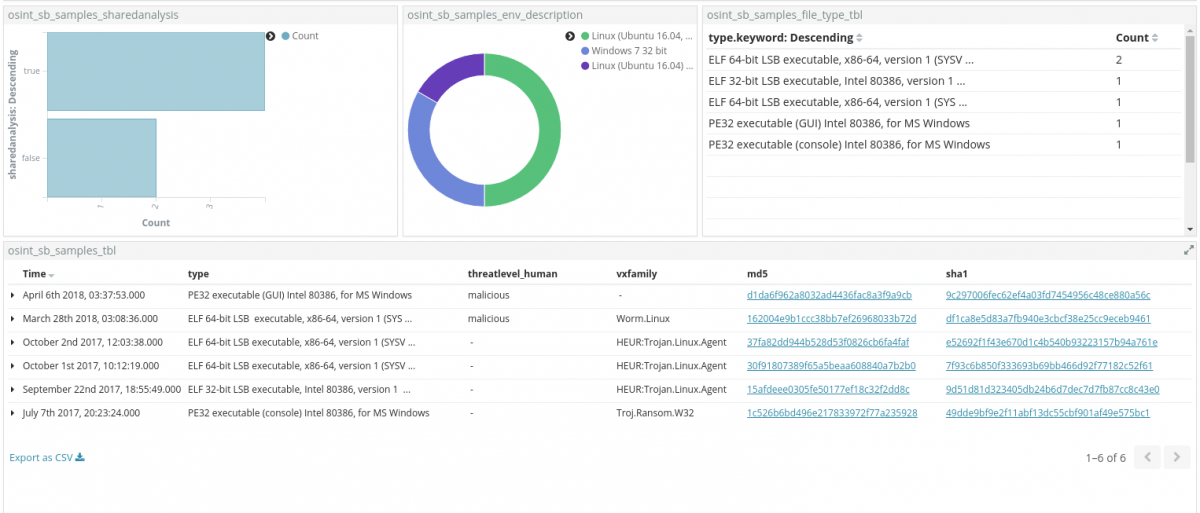

As a next step, we can select all the md5 nodes and move the analysis back to a dedicated dashboard for a final inspection.

Results here are then filtered on the shared analysis field. This helps in selecting only samples that are shared and freely downloadable from the hybrid-analysis platform.

Excluding Troj.Ransom.W32, which does not fit the current investigative criteria because it targets a Windows environment, all the other samples in the table look promising.

Looking at the technical details available in the online reports of the sandbox system, we get confirmation that the samples are indeed all from the same family.

From here, further possible steps include:

- Download all (publicly) available samples.

- Check samples for unique properties.

- Write a YARA rule on top of the discovered static properties.

- Scan a public or private malware corpus.

- If the malware corpus metadata is stored in Elasticsearch, as we will see in one of the coming use cases, update Elasticsearch documents based on YARA detection.

- Install the new rule into any security solution in use at the company.

- Build a Sentinl watcher for Go malware and monitor the systems for new samples.
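To make the "check samples for unique properties" and rule-writing steps more concrete, here is a plain-Python stand-in for the kind of "N of these strings" condition that a YARA rule would express. It is not YARA and not a validated signature: the marker strings are simply taken from the report excerpt shown earlier, and the threshold of three is an arbitrary assumption.

```python
ELF_MAGIC = b"\x7fELF"

# Library paths observed in the online report for the first sample.
MARKERS = [
    b"golang.org/x/crypto/ssh",
    b"github.com/Jeffail/gabs",
    b"github.com/abiosoft/semaphore",
    b"github.com/shirou/gopsutil/cpu",
]


def count_marker_hits(data: bytes) -> int:
    """Count how many of the marker strings occur anywhere in the file."""
    return sum(1 for marker in MARKERS if marker in data)


def looks_like_go_ssh_scanner(data: bytes, min_hits: int = 3) -> bool:
    """True for ELF files that contain at least `min_hits` marker strings."""
    return data.startswith(ELF_MAGIC) and count_marker_hits(data) >= min_hits
```

In practice the same logic would be written as a YARA rule so that it can be installed in the security products that consume such signatures, and it would need testing against clean Go binaries, which may legitimately embed some of these libraries.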

The coming section focuses on this last step.

Siren Alert watcher

Unfortunately, the feed coming from the online sandbox system does not provide the network-related content that we observed in the previous section.

It goes without saying that being able to create a Sentinl watcher that triggers on such a set of strings would be a quick win. The feed does, however, contain multiple sections related to the network activity generated by a sample.

Among others, the one related to the intrusion detection system (IDS) can come in handy for this particular use case.

Each IDS rule has a description section, basically a human-readable explanation of what the rule triggers on. We can rely on specific strings in the description to start tracking samples written in Golang.

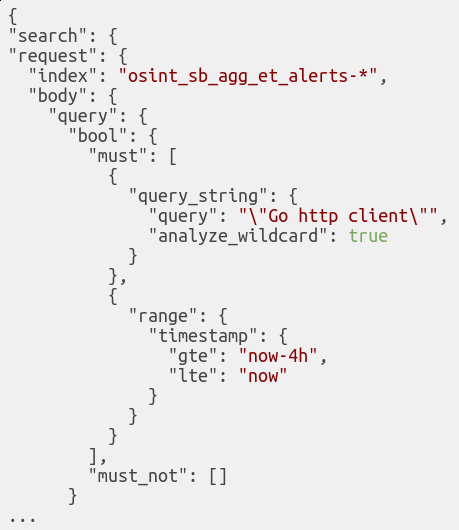

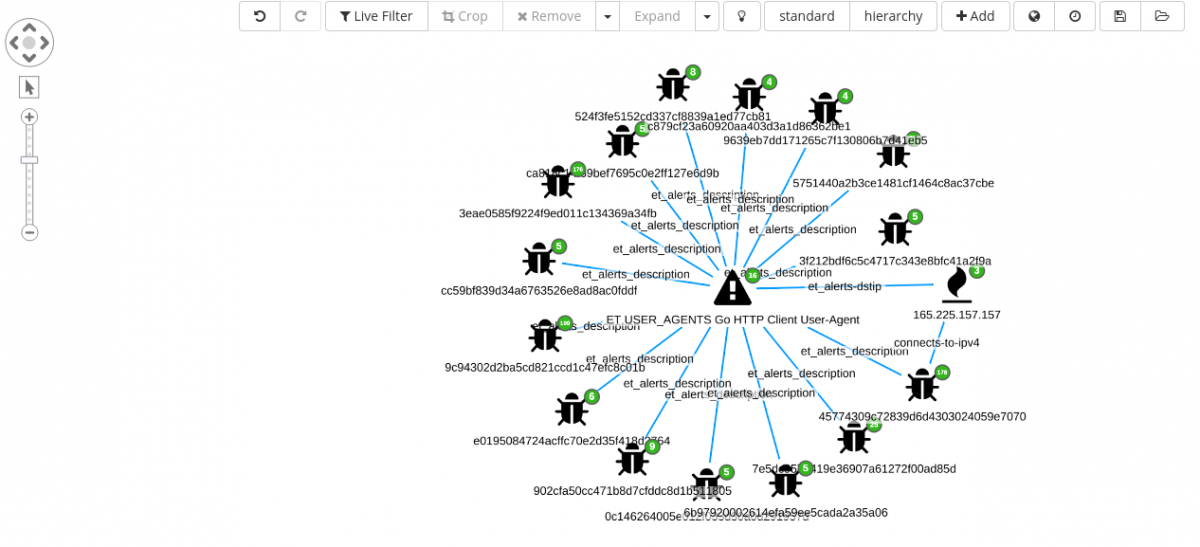

Given a rule description such as ET USER_AGENTS Go HTTP Client User-Agent, we can just search for “Go HTTP client”.

Even if simple, it is a good starting point from which we can begin.

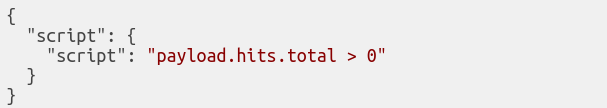

A basic Sentinl watcher is made up of Input ⇒ Condition ⇒ Actions.

INPUT

It defines the search criteria and the time frame to process at every execution.

CONDITION

It defines, as a rule, how many hits must be counted before an alert is created.

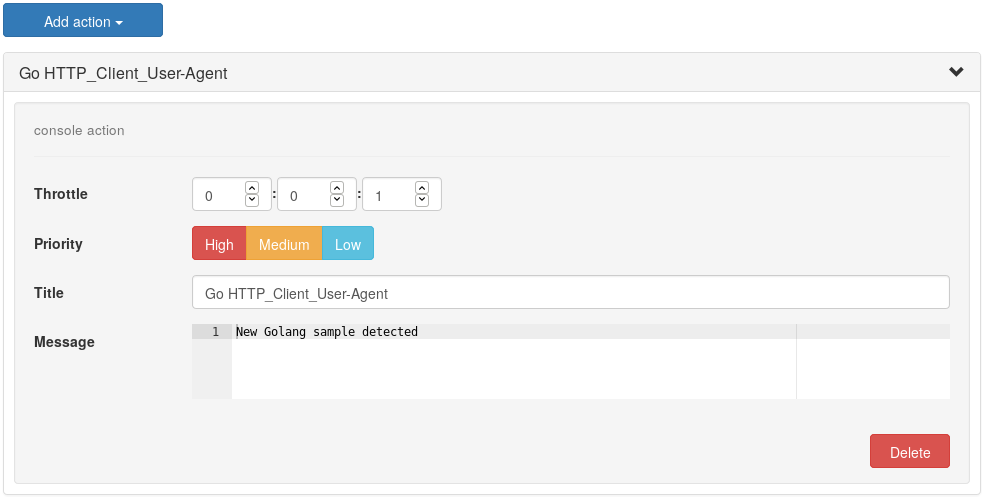

ACTIONS

Each action, together with its priority, is chosen when a threshold is reached.
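The Input ⇒ Condition ⇒ Actions flow can be illustrated with a short sketch. This is not the Sentinl configuration format: it only mirrors the three stages in plain Python. The query body uses standard Elasticsearch query DSL clauses (`match_phrase` and `range`), while the field names `ids_alerts.description` and `@timestamp`, the one-hour window and the action payload are assumptions made for the example.

```python
def build_input_query(phrase: str, window: str = "now-1h") -> dict:
    """INPUT: search criteria plus the time frame processed at each run."""
    return {
        "query": {
            "bool": {
                # Hypothetical field holding the IDS rule description.
                "must": [{"match_phrase": {"ids_alerts.description": phrase}}],
                "filter": [{"range": {"@timestamp": {"gte": window}}}],
            }
        }
    }


def condition_met(hit_count: int, threshold: int = 1) -> bool:
    """CONDITION: alert only when enough hits were counted."""
    return hit_count >= threshold


def choose_actions(hit_count: int, threshold: int = 1) -> list[dict]:
    """ACTIONS: what to do once the threshold is reached (illustrative only)."""
    if not condition_met(hit_count, threshold):
        return []
    return [{"type": "email", "priority": "high",
             "message": f"{hit_count} new samples matched the watcher"}]


query = build_input_query("Go HTTP Client")
```

Keeping the three stages separate makes it easy to tune the threshold or swap the notification channel without touching the search criteria.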

After it is saved, the Sentinl engine will start monitoring the defined indices at the defined intervals, and any new alerts will become visible in a dedicated index, named watcher_alarms-*.

On top of this, a dedicated dashboard can be created. Nevertheless, if you are in search of a 24/7 alerting system, Sentinl also makes it possible to generate emails or Slack messages directly from the Actions list.

On the one hand, we can use Sentinl to generate alerts on new incoming data. On the other hand, we can also leverage Siren graph capabilities to hunt for samples triggering, for instance, the same IDS rule.

Go HTTP client user-agent

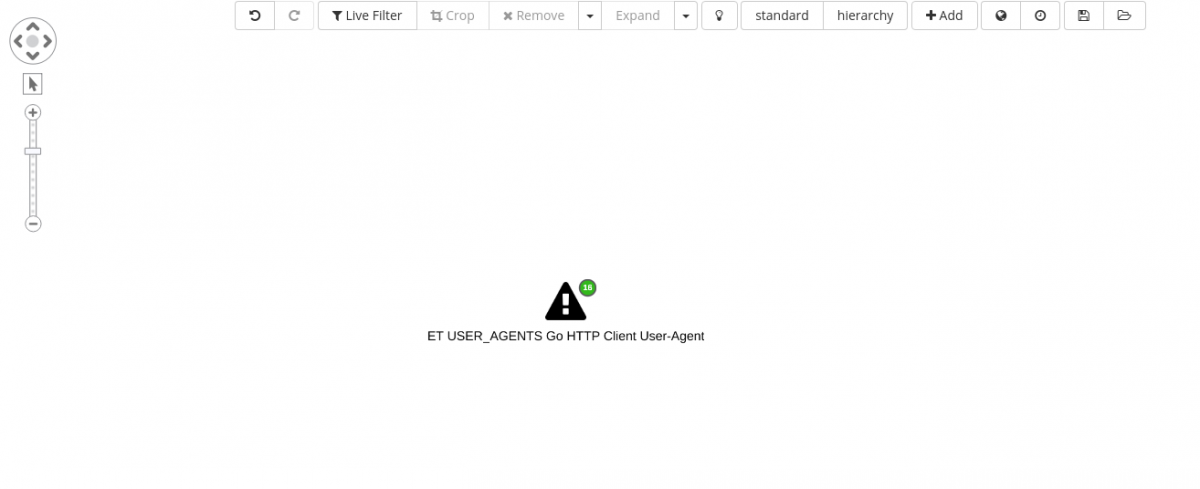

We close this use case by visualizing any specimen observed by the sandbox system that has triggered the same rule for which the Sentinl watcher was created in the previous section.

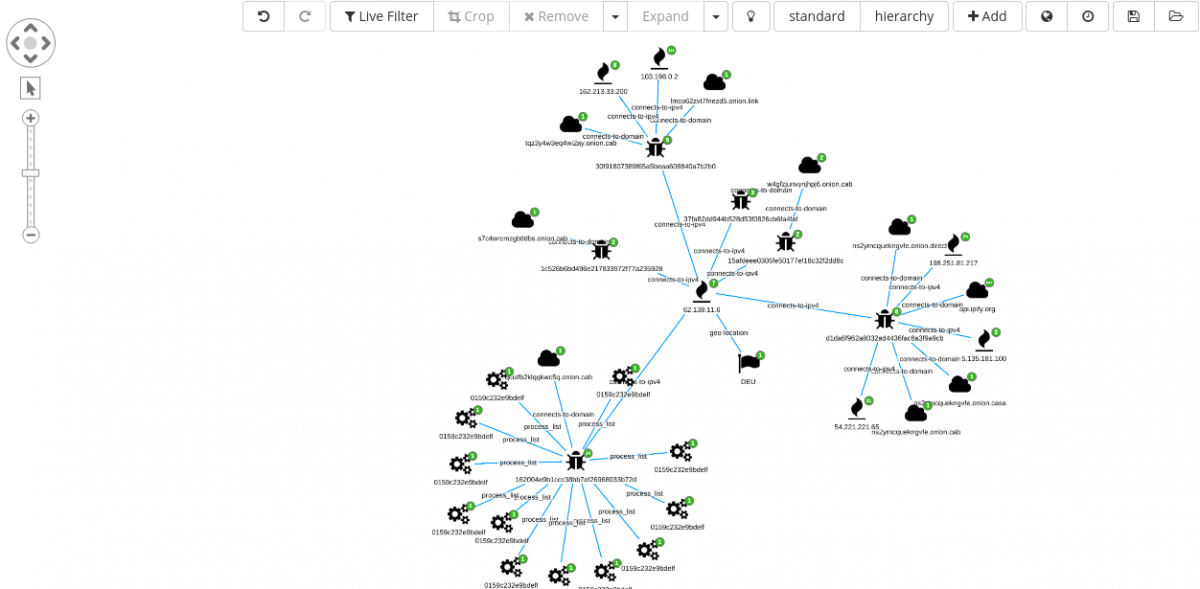

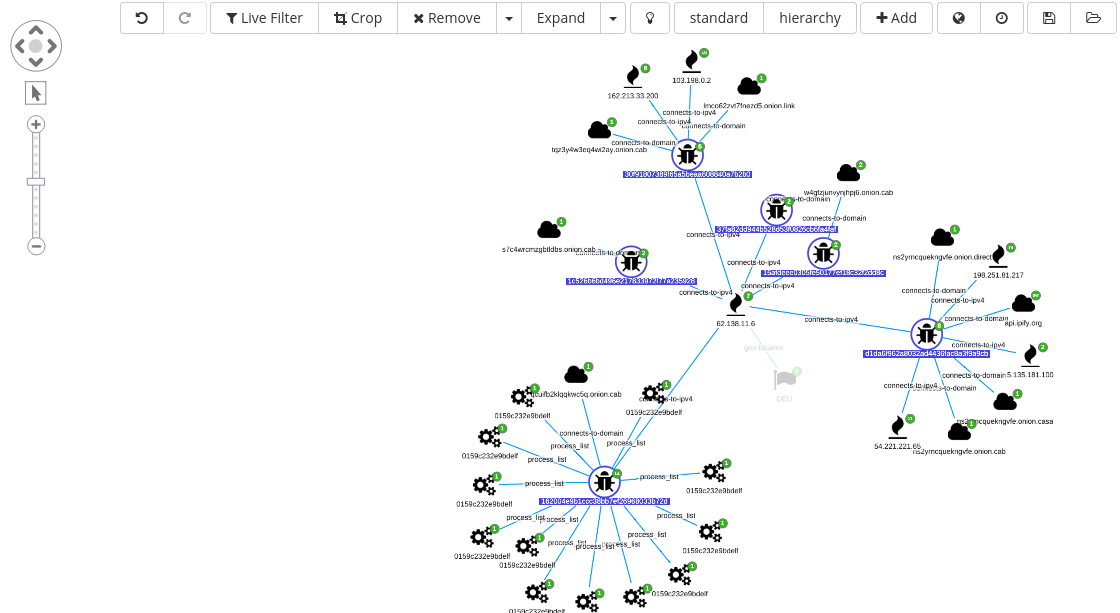

Adding the IDS signature “ET USER_AGENTS Go HTTP Client User-Agent” to the graph already shows 16 connected elements.

Out of the 16 new nodes, 15 are file hashes and the other is an IP address.

Selecting only the samples (file hashes) and expanding them, we can see new edges in the crowded view, such as:

- Additional NIDS alerts.

- Extracted files.

- Domains.

- New IP.

- IP geolocation.

From this perspective, it is possible to start removing unnecessary edges and focus on interesting elements, for instance, malware sharing:

- C2 infrastructure.

- Process artifacts.

Having achieved a satisfactory result, the graph can be saved and reopened at a later stage, or added to a new graph built on top of another investigation.

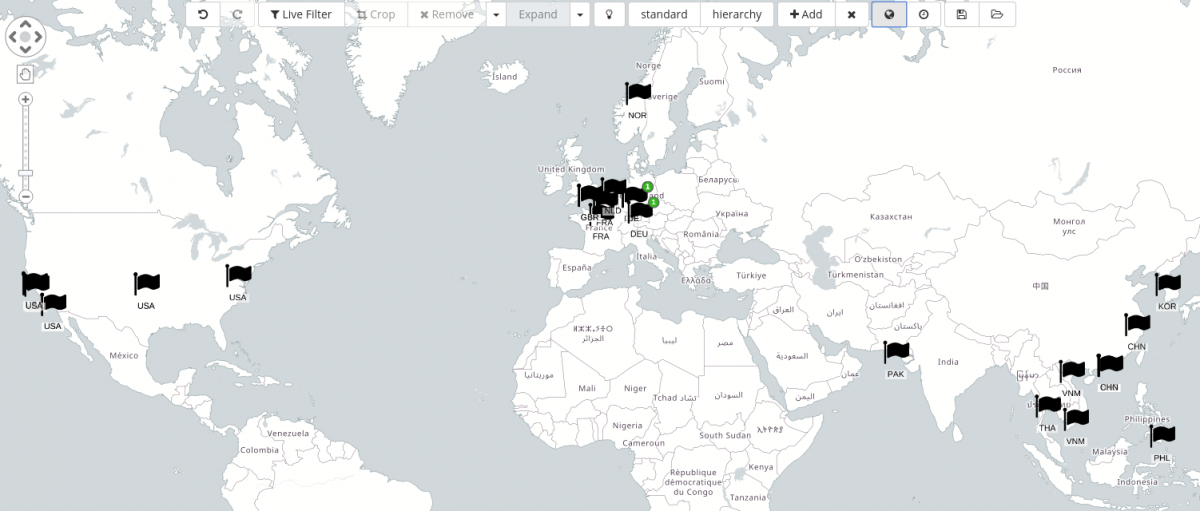

Finally, because geo-coordinates are also present for each IP address, we can display their location on a map, as always, from within the graph.

Case 2 – GandCrab ransomware

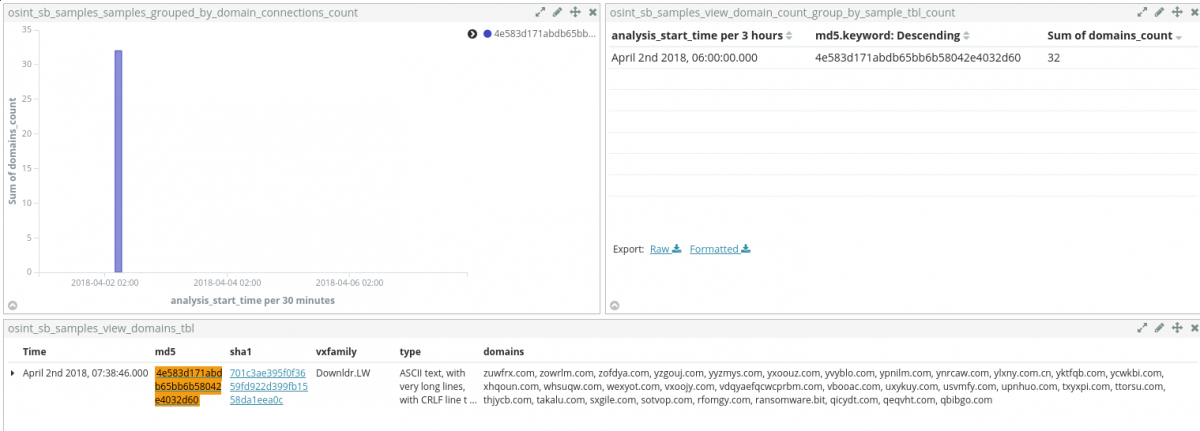

This time, data discovery starts from a Sentinl watcher. Specifically, the crafted watcher triggers on any sample that generates a high number of DNS requests.

Skimming through the list of domains, ransomware[.]bit stands out from the crowd, mostly because it uses a .bit top-level domain (TLD). More on this in a later section.
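The logic behind such a watcher can be approximated as follows. This is a simplified sketch over in-memory reports rather than the actual watcher definition, and both the `domains` field name and the default threshold of 20 distinct domains are assumptions chosen for illustration.

```python
def noisy_dns_samples(reports: list[dict], min_domains: int = 20) -> dict[str, int]:
    """Return {md5: distinct domain count} for samples at or above the threshold."""
    flagged = {}
    for report in reports:
        # Count each domain once, ignoring case differences.
        unique_domains = {d.lower() for d in report.get("domains", [])}
        if len(unique_domains) >= min_domains:
            flagged[report["md5"]] = len(unique_domains)
    return flagged


reports = [
    {"md5": "s1", "domains": ["a.example", "A.example", "b.example"]},
    {"md5": "s2", "domains": ["a.example", "b.example", "c.example"]},
]
print(noisy_dns_samples(reports, min_domains=3))
```

Counting distinct domains rather than raw requests avoids flagging a sample that simply resolves the same name repeatedly.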

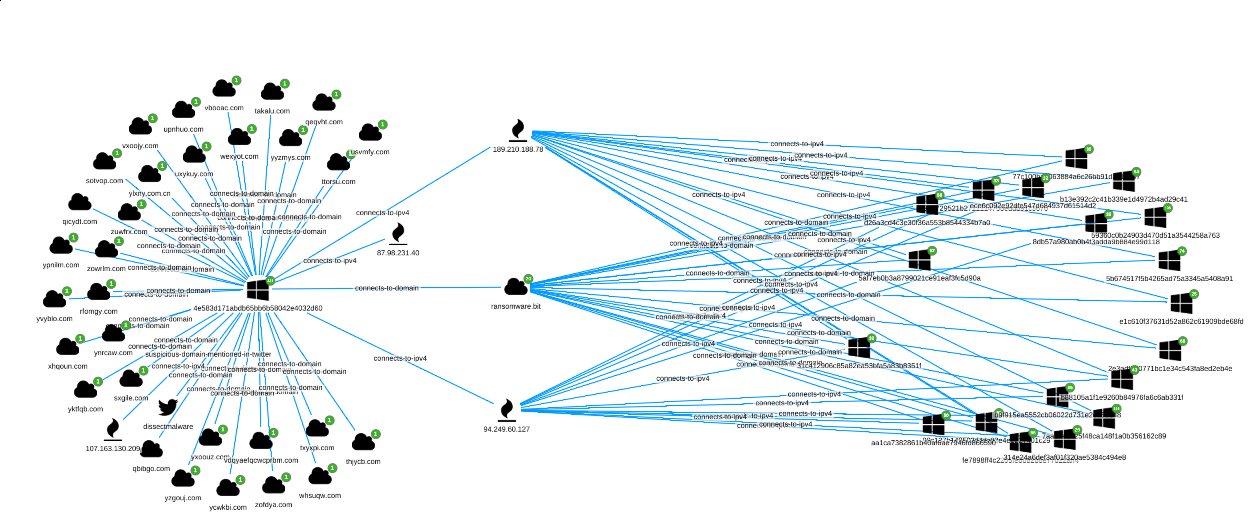

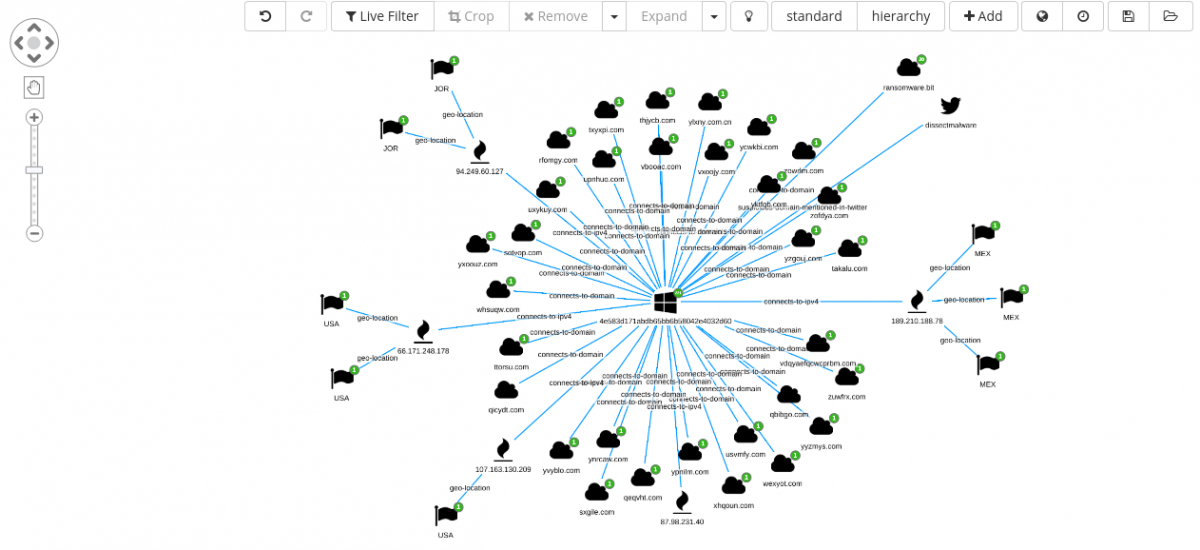

We can immediately add the sample 4e583d171abdb65bb6b58042e4032d60 to the Siren Graph Browser and start investigating.

From the above view, we can confirm:

- There is at least one Twitter message (reporting the observed file hash).

- The ransomware[.]bit domain looks connected to 19 other samples.

Investigating through the dedicated Twitter dashboard, we can see that the author of the tweet gives some hints about the infection and chain of execution, namely #Obfuscated #JS -> WScript -> dl #PE, plus, in this case, a link to the online sandbox analysis of the sample.

Quick web search

A quick web search points us to a Malwarebytes article that analyzes the ransomware family in depth.

Back to Siren

At this stage, as previously done for the GoScanSSH malware, we can move back to the Siren system and fire up the graph browser.

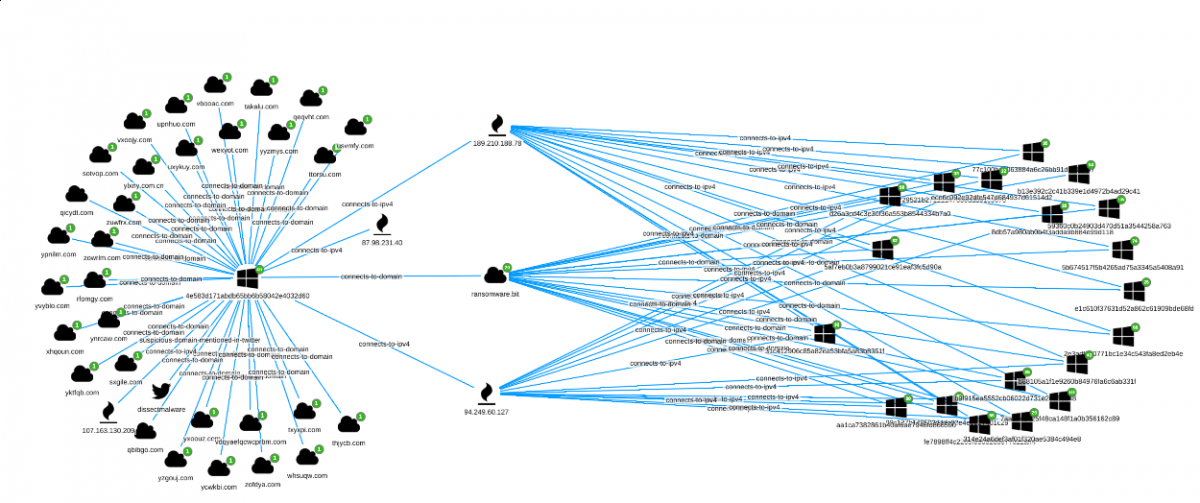

Pivoting on ransomware[.]bit yields 19 additional samples (at the time of the analysis).

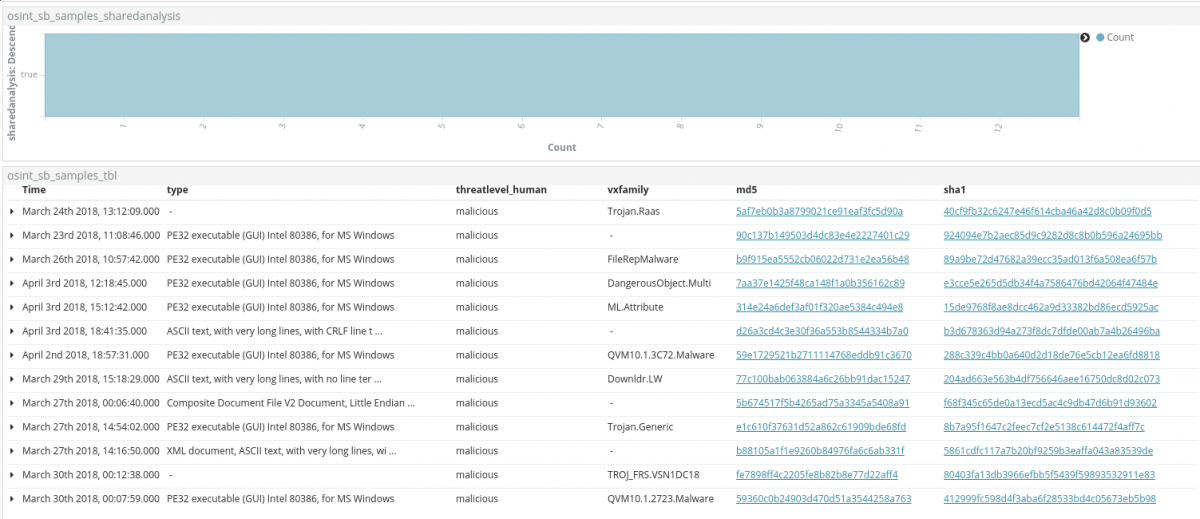

As for the previous investigation, each new md5 node, representing a malware sample, can be selected and inspected with a dedicated dashboard.

Samples are filtered on the shared analysis field, making it possible to highlight only the reports for which the malware sample can be downloaded.

From here, as outlined in the GoScanSSH case, we could:

- Download all the (publicly) available samples.

- Check samples for unique properties.

- Write a YARA rule (detecting the dynamically unpacked sample).

- Add the new rule to any security solution available in your infrastructure able to work with such signature.

Investigating .bit domains and associated samples

The GandCrab ransomware command-and-control (CnC) channel relies on a .bit top-level domain (TLD). Such TLDs are not regulated by the Internet Corporation for Assigned Names and Numbers (ICANN), and they can be purchased using a cryptocurrency named Namecoin.

Dot-Bit-enabled (.bit) websites are, among other advantages, hard to trace and rely on decentralized DNS, which makes them attractive for such cybercriminal activities.

If we were interested in investigating any sample using this TLD scheme, we would be just one query away from the result, provided the query is applied to the right Elasticsearch index.

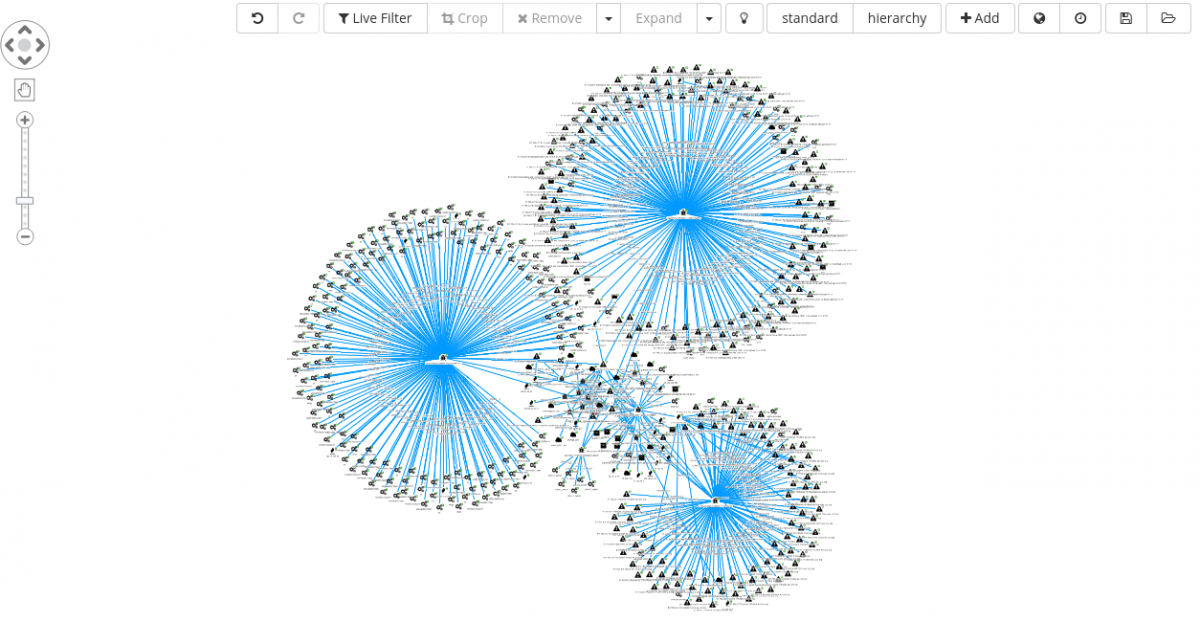

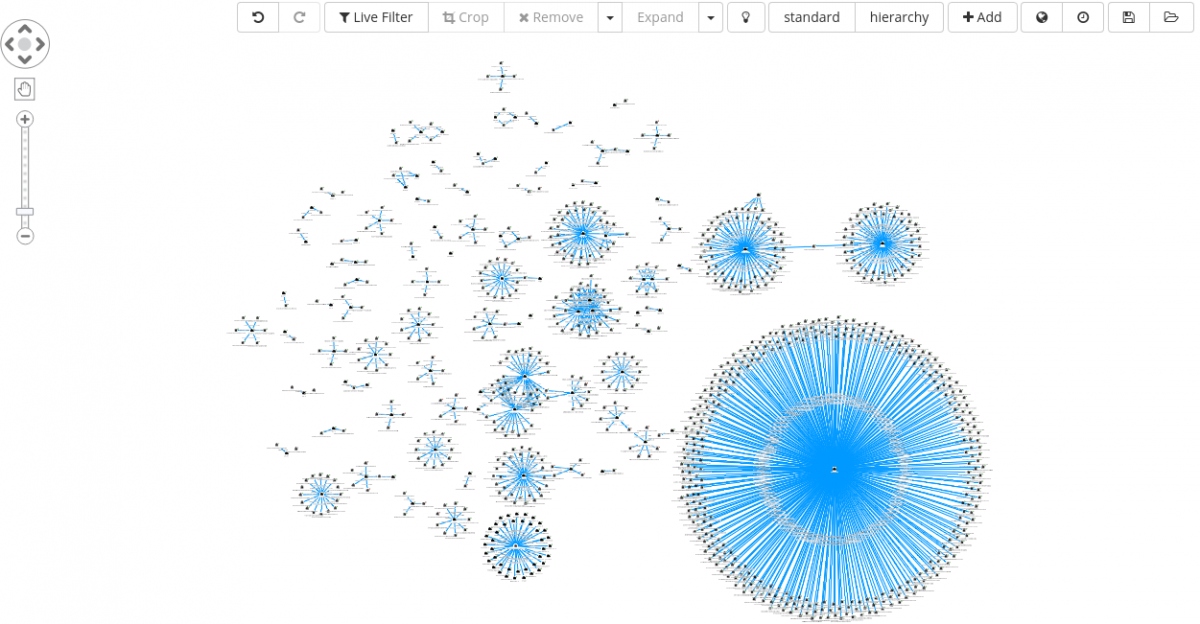

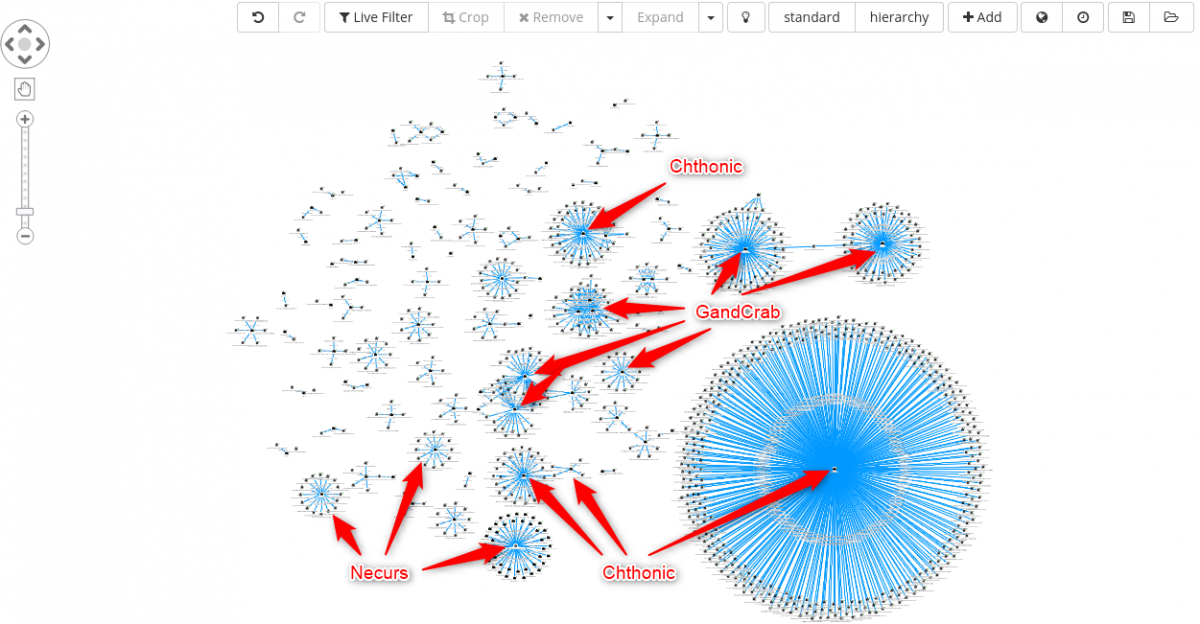

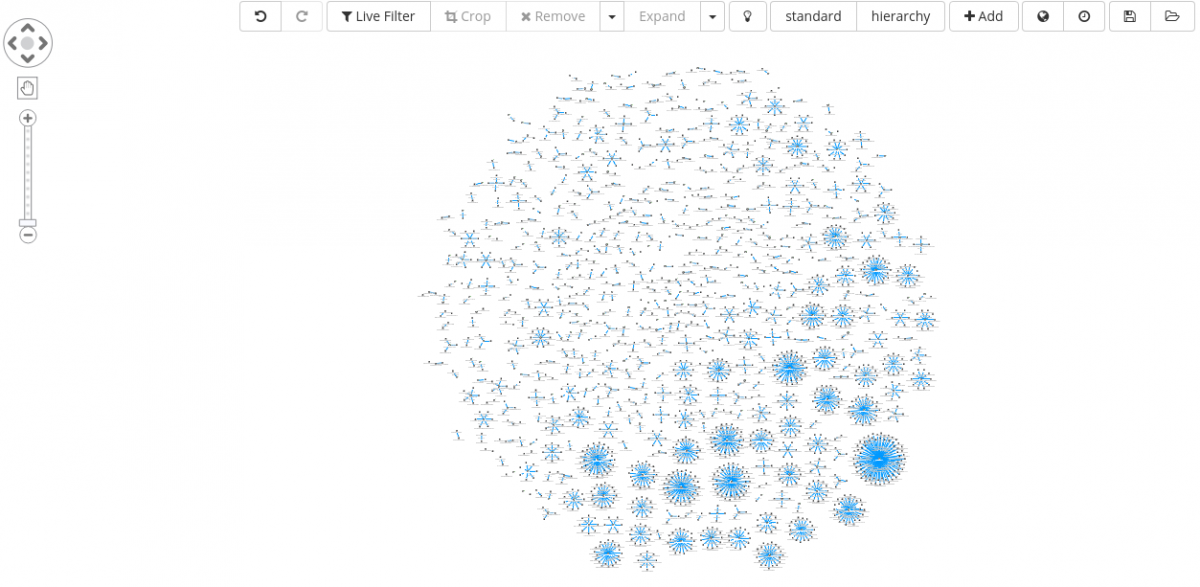

Simply searching for *.bit, from within the Siren Graph Browser, returns 136 domains (at the time of the analysis).
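Outside the graph browser, the same search can be expressed as an Elasticsearch wildcard query, or applied locally to a list of domains. The sketch below shows both; the field name `domain` is an assumption about the index mapping, and the helper names are made up for the example.

```python
def bit_domain_query(field: str = "domain") -> dict:
    """Elasticsearch query body matching values that end in .bit."""
    return {"query": {"wildcard": {field: {"value": "*.bit"}}}}


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as ransomware[.]bit back into a plain one."""
    return indicator.replace("[.]", ".")


def is_bit_domain(domain: str) -> bool:
    """Local check for the .bit TLD, tolerant of case and a trailing dot."""
    return refang(domain).strip().lower().rstrip(".").endswith(".bit")


domains = ["ransomware[.]bit", "example.com", "bit.example.com"]
print([d for d in domains if is_bit_domain(d)])
```

Queries with a leading wildcard can be costly on large indices, so indexing the TLD as its own field at collection time may be worth considering if this search is run often.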

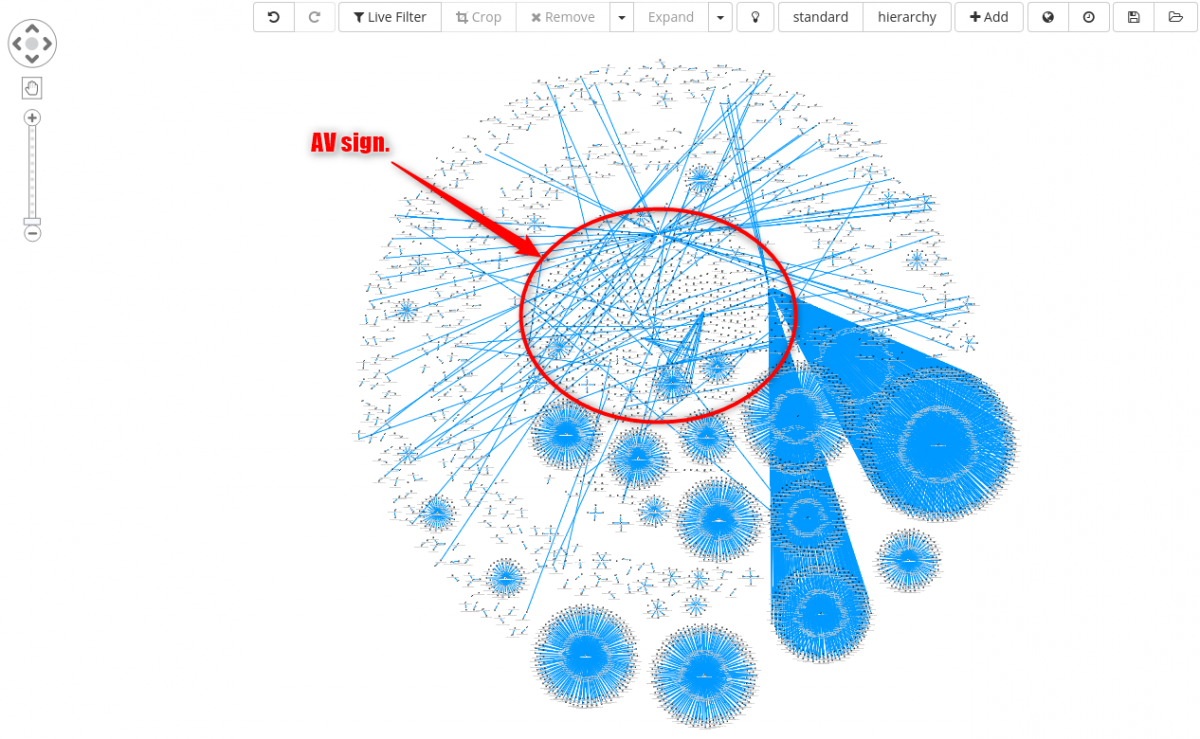

Selecting all of them and expanding, we are greeted with the following set of clusters.

Analyzing each cluster one by one, we can start identifying some distinct malware families, such as:

- Chthonic

- GandCrab

- Necurs

Case 3 – Static based malware clustering

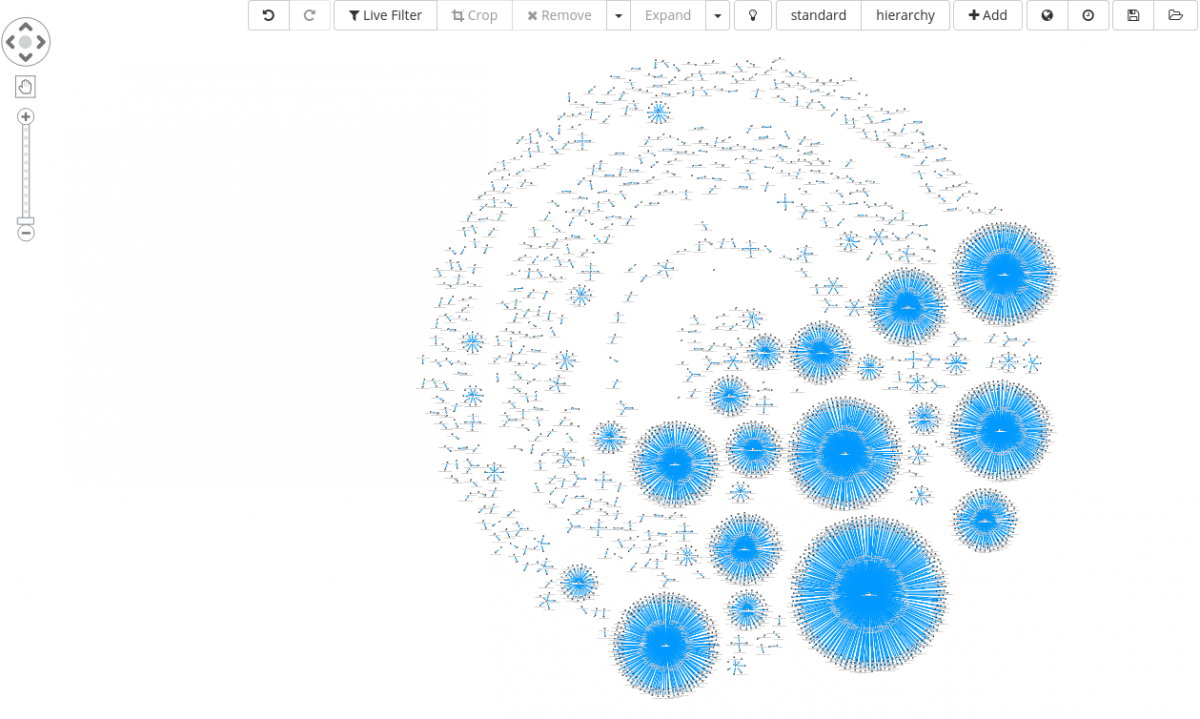

We close the article by giving a quick overview of a relatively small subset of roughly 30,000 malware samples extracted from a malware zoo. As the name suggests, it is essentially a space where malware is stored.

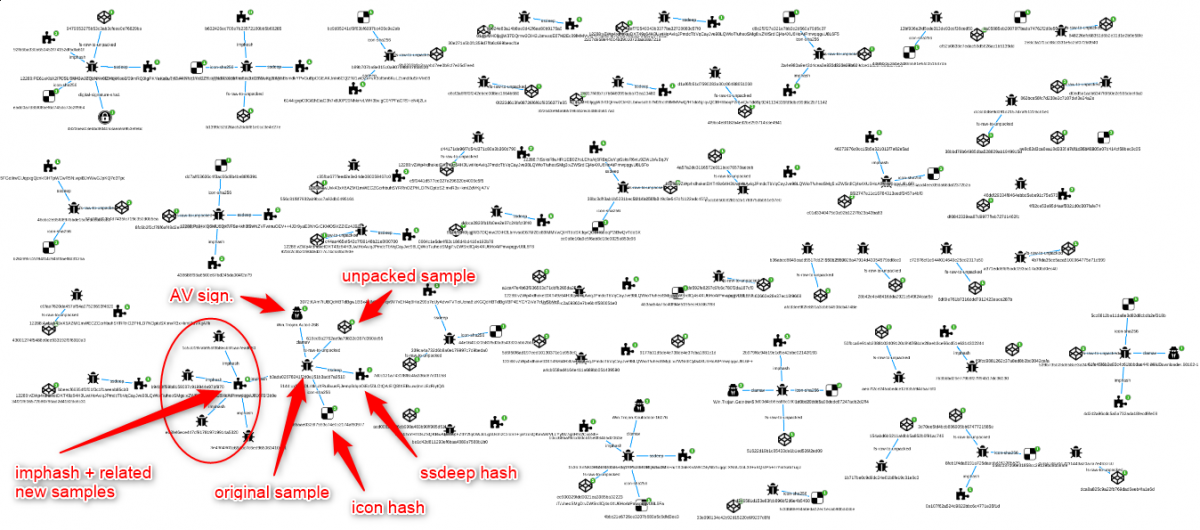

If you recall, at the beginning of the article when the system modules were introduced, one of them was labeled as File Parser. Its main task is to extract static features from Windows executable files, enrich the analysis with additional plugins when possible and generate a machine-readable report.

It goes without saying that, if the right features are collected, it becomes possible, to a certain degree, to group together samples sharing the same properties.

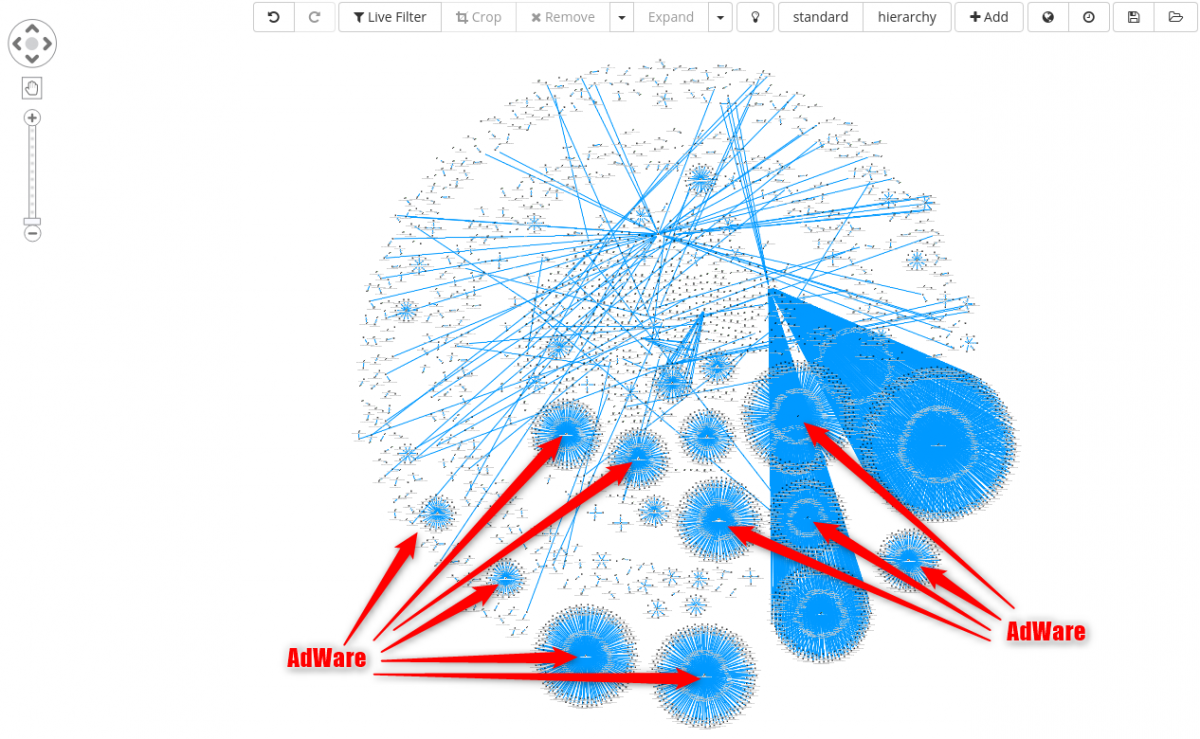

Depending on the objectives, clustering, as you will see, can also be a good starting point for cleaning uninteresting malware categories out of a malware storage, for example by identifying adware and its variations.

For instance, samples could be clustered based on their file icon.

The same cluster can also be further enriched by adding antivirus signatures, as seen below.

From a quick check, all the clusters highlighted below are connected to some sort of adware family.

Assuming we are not interested in such category of malware, next possible steps are:

- Write a YARA rule for filtering out such family from the corpus.

- Add the new YARA rule to an in-house sandbox rule repository, so as to tag any new sample with the same characteristics.

- Re-scan malware corpus.

- Update sample tags with the new signature.

The File Parser module also statically unpacks UPX-packed files, which makes it possible to pair an original sample with its unpacked version. As in the previous case, clusters can be enriched with the following:

- Icon hash.

- Imphash.

- Ssdeep.

- AV signatures.

- Digital signature.
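Grouping samples on one of these properties boils down to collecting the hashes that share the same value. The following is a minimal sketch of that idea; the record layout and the field names `imphash` and `icon_hash` are assumptions, and the real File Parser output may be structured differently.

```python
from collections import defaultdict


def cluster_by(samples: list[dict], feature: str) -> dict[str, list[str]]:
    """Group sample hashes by an exact feature value, keeping only real clusters."""
    groups: dict[str, list[str]] = defaultdict(list)
    for sample in samples:
        value = sample.get(feature)
        if value:  # skip samples where the feature could not be extracted
            groups[value].append(sample["md5"])
    # A cluster needs at least two members to be of interest.
    return {value: hashes for value, hashes in groups.items() if len(hashes) > 1}


samples = [
    {"md5": "s1", "imphash": "i1", "icon_hash": "c1"},
    {"md5": "s2", "imphash": "i1", "icon_hash": "c2"},
    {"md5": "s3", "imphash": "i2", "icon_hash": None},
]
print(cluster_by(samples, "imphash"))
```

Exact matching suits values such as the icon hash or imphash. Ssdeep is a fuzzy hash, so grouping on it would require a similarity comparison between digests rather than equality.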

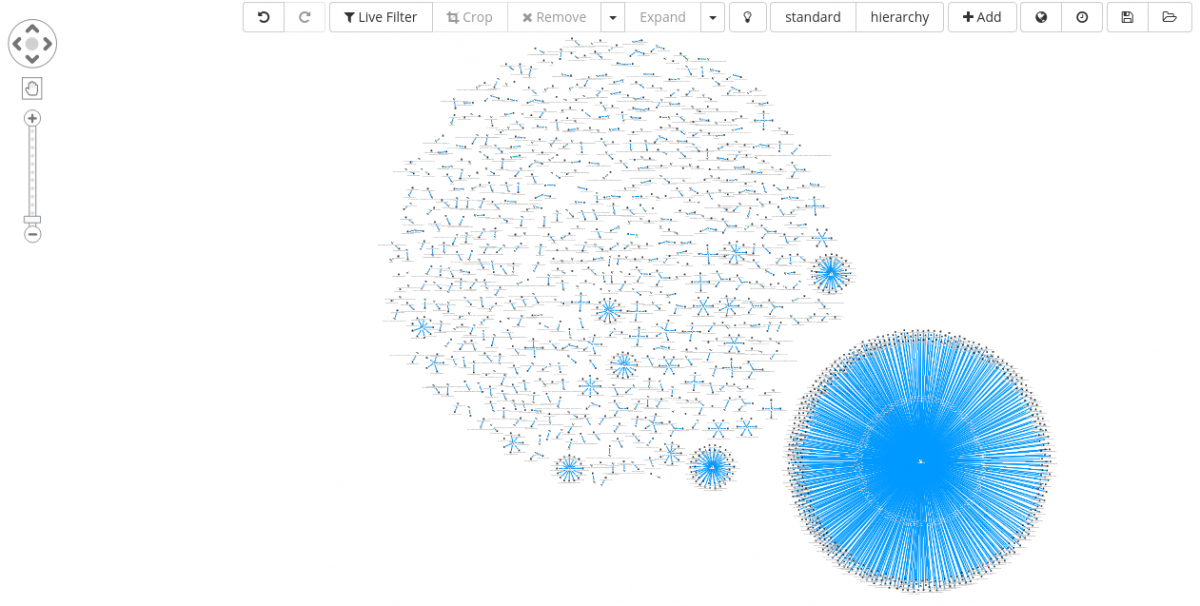

Some additional examples can be given, for instance clustering on a sample's absolute Program Database (PDB) path name and on its digital signature.

Absolute path name of PDB

Clusters shown in this section can be built in an easy and straightforward way after defining an appropriate data schema and object relations (field A : index #1 → field B : index #2).
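The relation notation above (field A : index #1 → field B : index #2) can be pictured as a small declarative object plus a join over the two indices. The sketch below does this in memory for illustration only; it does not reflect how Siren Federate executes joins, and the index names, field names and documents are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Relation:
    """field A : index #1 -> field B : index #2"""
    left_index: str
    left_field: str
    right_index: str
    right_field: str


def join(relation: Relation, indices: dict[str, list[dict]]) -> list[tuple[dict, dict]]:
    """Pair documents from both indices whose related fields hold the same value."""
    lookup: dict[str, list[dict]] = defaultdict(list)
    for doc in indices[relation.right_index]:
        key = doc.get(relation.right_field)
        if key is not None:
            lookup[key].append(doc)
    return [
        (left, right)
        for left in indices[relation.left_index]
        for right in lookup.get(left.get(relation.left_field), [])
    ]


indices = {
    "tweets": [{"user": "a", "md5": "h1"}, {"user": "b", "md5": "h3"}],
    "reports": [{"md5": "h1", "verdict": "malicious"}, {"md5": "h2", "verdict": "clean"}],
}
tweet_to_report = Relation("tweets", "md5", "reports", "md5")
pairs = join(tweet_to_report, indices)
```

Once relations like this are declared, each additional data source only needs one more relation to become reachable from the existing ones.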

If manually exploring a given data set, it becomes very intuitive to compare its elements, like in these scenarios, instead of reviewing histogram charts or similar graphical representation of data.

That said, graphs are not the solution to every kind of problem, but they do empower users to naturally explore heterogeneous cases that fit such a visualization approach well.

Closing thoughts

Based on the presented use cases, you should start getting a better understanding of how you could use Siren for your day-to-day data exploration and analysis workflow.

In any case, the presented scenarios are just the tip of the iceberg, and much more can be squeezed out of the data.

In some of the previous investigations the pivot point that was driving the analysis in a new direction or giving more context was an IP address, a domain, a Twitter message or static similarities between files, but also of particular interest would be the collection and the analysis of:

- Additional and more reliable properties of a given sample gathered from an in-house sandbox system.

- Log events coming from network perimeter devices tied together to malware network behaviors.

- Endpoint logs mapped to unique malware activities observed inside a sandbox during dynamic execution.

Applying a reliable analysis process of logs and creating a robust schema for data linking are just some of the first and basic steps to follow while creating a system that can support your daily discovery work.

The heavy lifting of the remaining tasks is left to Siren, freeing up time for the explorer to spot interesting anomalies that deserve further investigation.

Contributing author: Patrick Pellegrino

Interested in Siren for supercharging Elasticsearch in cybersecurity and operational log analysis? See this blog post and our cybersecurity specific page.